I remember sitting in a dim microscopy lab at 2:00 AM, staring at a screen full of grainy, indistinct blobs that looked more like spilled ink than actual biological data. I had spent hours calibrating every knob and lens, yet the images remained stubbornly soft. That was the moment I realized that no amount of expensive hardware could bypass the fundamental reality of the Point-spread Function (PSF). Everyone talks about resolution as if it’s just a spec on a brochure, but they rarely admit that every single optical system is essentially fighting a losing battle against blur.

I’m not here to feed you a textbook definition or drown you in Greek symbols that only serve to make the math look intimidating. Instead, I want to show you how to actually work with the messiness of real-world optics. We are going to strip away the academic fluff and look at the Point-spread Function (PSF) through the lens of practical application. By the end of this, you’ll understand exactly how that “blur” works, why it happens, and how you can actually account for it to get the clarity your data deserves.

Mastering Convolutional Imaging Principles and Light Distribution

To really get how an image is formed, you have to look past the pixels and understand the math happening under the hood. At its core, we’re dealing with convolutional imaging principles, where the final image isn’t just a direct copy of reality, but a mathematical “smearing” of the original scene. Think of it like a paintbrush: the scene is the intended painting, but the PSF is the specific shape and texture of the bristles. When light travels through a lens, it doesn’t hit the sensor as perfect, infinitely small dots; instead, it spreads out based on the inherent physics of the hardware.
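To make the paintbrush analogy concrete, here is a minimal sketch of image formation as a convolution, written in plain Python so it runs without extra libraries. The Gaussian kernel is an assumption standing in for a real PSF (actual PSFs are rarely this tidy), and the helper names `gaussian_psf` and `convolve_same` are made up for this illustration.

```python
import math

def gaussian_psf(sigma, radius):
    """Normalized 1D Gaussian kernel, standing in for a real PSF."""
    taps = [math.exp(-0.5 * (i / sigma) ** 2) for i in range(-radius, radius + 1)]
    total = sum(taps)
    return [t / total for t in taps]

def convolve_same(signal, kernel):
    """Zero-padded convolution that returns len(signal) samples."""
    radius = len(kernel) // 2
    out = []
    for n in range(len(signal)):
        acc = 0.0
        for k, weight in enumerate(kernel):
            idx = n - (k - radius)
            if 0 <= idx < len(signal):
                acc += signal[idx] * weight
        out.append(acc)
    return out

# The "scene": one ideal, infinitely small point of light in the middle.
scene = [0.0] * 21
scene[10] = 1.0

psf = gaussian_psf(sigma=2.0, radius=6)
image = convolve_same(scene, psf)

# The point has been smeared into a copy of the PSF: same total light,
# but spread over 13 samples instead of 1.
print([round(v, 3) for v in image])
```

Feeding in a single point returns the PSF itself, which is exactly why it carries that name. For real 2D images you would normally reach for an FFT-based routine from NumPy or SciPy rather than nested loops.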

This spreading is what ultimately sets your optical system resolution. If the light distribution is too wide or erratic, fine details vanish into a soup of overlapping blurs. This is where signal processing in optics earns its keep. When we encounter a blurry shot, we aren’t just guessing; we use deconvolution, the mathematical inverse of that smearing, to try to reverse the spreading. By understanding exactly how the light distributed itself during capture, we can begin to peel back the layers of blur and recover the sharper scene underneath.

Why Optical System Resolution Defines Your Visual Reality

At its core, the limits of what you can actually see aren’t just about how much magnification you have, but how much detail the hardware can actually resolve. This is where optical system resolution becomes the gatekeeper of your visual experience. If your lens or sensor can’t distinguish between two closely spaced points, they simply merge into a single, indistinct blob. You aren’t just looking at a blurry photo; you are looking at the physical ceiling of what the hardware is capable of capturing.
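The merging of two nearby points is easy to see in a toy model. The sketch below assumes a Gaussian PSF (a simplification) and two equally bright point sources; `blurred_pair` and `midpoint_dip` are hypothetical helpers written for this example. For this particular model, the dip between the peaks disappears once the separation drops to about twice the Gaussian sigma.

```python
import math

def blurred_pair(separation, sigma, length=81):
    """Intensity profile of two equal point sources blurred by a Gaussian PSF."""
    center = length // 2
    left = center - separation / 2.0
    right = center + separation / 2.0
    return [
        math.exp(-0.5 * ((x - left) / sigma) ** 2)
        + math.exp(-0.5 * ((x - right) / sigma) ** 2)
        for x in range(length)
    ]

def midpoint_dip(profile):
    """Fractional drop at the midpoint relative to the brightest sample.

    0.0 means the two points have merged into a single blob.
    """
    mid = len(profile) // 2
    return 1.0 - profile[mid] / max(profile)

merged = blurred_pair(separation=4, sigma=3.0)     # closer than 2 * sigma
resolved = blurred_pair(separation=12, sigma=3.0)  # well beyond 2 * sigma

print("dip when close:", midpoint_dip(merged))
print("dip when far:  ", round(midpoint_dip(resolved), 3))
```

No amount of magnification changes the first result: the dip is gone from the signal itself, not just hard to see.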

When we push into the realm of diffraction-limited imaging, we are essentially fighting against the fundamental physics of light itself. Even with the most expensive glass on the market, light waves diffract as they pass through the finite aperture of the lens, so a single point in the scene always lands on the sensor as a small pattern rather than a point. That sets a hard limit on how sharp an image can be. Understanding this boundary is vital because it dictates whether you can rely on raw hardware or if you’ll eventually need to lean on signal processing in optics to recover the clarity that the physical lens couldn’t provide on its own.
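For a rough sense of where that limit sits, the standard Rayleigh criterion for a circular aperture is a useful yardstick. The symbols are not from the discussion above: λ is the wavelength, NA the numerical aperture of the objective, and D the aperture diameter. The worked numbers are assumed values for a green-light, high-NA oil objective, chosen only for illustration.

```latex
% Rayleigh criterion: smallest resolvable separation in the object plane
r_{\text{Rayleigh}} = \frac{0.61\,\lambda}{\mathrm{NA}}

% Angular form for a circular aperture of diameter D
\theta_{\min} \approx \frac{1.22\,\lambda}{D}

% Worked example (assumed values): \lambda = 550\,\text{nm}, \mathrm{NA} = 1.4
r_{\text{Rayleigh}} = \frac{0.61 \times 550\,\text{nm}}{1.4} \approx 240\,\text{nm}
```

Two points closer together than roughly this distance will blend, however good the glass is.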

Pro-Tips for Navigating the Blur

- Don’t chase perfection; chase consistency. A predictable, stable PSF is often more valuable for image reconstruction than a “sharp” one that shifts every time you move the lens.

- Always account for your medium. Remember that the PSF isn’t just about your glass; it’s heavily influenced by the air, water, or biological tissue the light has to fight through.

- Use deconvolution as a scalpel, not a sledgehammer. When trying to reverse the blur, applying too much mathematical correction will turn your image into a noisy, artifact-heavy mess.

- Look at the tails, not just the core. The central peak of the PSF tells you about sharpness, but the “wings” (the outer spread) scatter light from bright regions into their darker neighbors, which is what washes out faint detail in high-contrast areas.

- Calibrate with a known standard. You can’t fix what you haven’t measured—use a true point source to map your system’s PSF before you start trusting your data.
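One simple way to put a number on that calibration tip is the full width at half maximum (FWHM) of a line profile through your point-source image. The sketch below assumes a single-peaked, background-subtracted 1D profile; `fwhm` is a hypothetical helper, and the synthetic Gaussian and the 0.1 µm pixel size are illustrative values, not measurements.

```python
import math

def fwhm(profile, pixel_size=1.0):
    """Estimate the full width at half maximum of a single-peaked profile.

    Assumes the background has already been subtracted. The half-maximum
    crossings are located by linear interpolation between samples.
    """
    peak = max(profile)
    half = peak / 2.0
    center = profile.index(peak)

    # Walk outward from the peak to the first sample below half maximum.
    left = center
    while left > 0 and profile[left] >= half:
        left -= 1
    right = center
    while right < len(profile) - 1 and profile[right] >= half:
        right += 1
    if profile[left] >= half or profile[right] >= half:
        raise ValueError("profile does not fall below half maximum on both sides")

    left_cross = left + (half - profile[left]) / (profile[left + 1] - profile[left])
    right_cross = right - (half - profile[right]) / (profile[right - 1] - profile[right])
    return (right_cross - left_cross) * pixel_size

# Synthetic "bead" profile: a Gaussian with sigma = 3 pixels.
bead = [math.exp(-0.5 * ((x - 30) / 3.0) ** 2) for x in range(61)]

print("FWHM in pixels:", round(fwhm(bead), 2))
print("FWHM in microns:", round(fwhm(bead, pixel_size=0.1), 3))
```

Tracking this one number across sessions is a cheap way to check the consistency the first tip asks for. Keep in mind that FWHM only describes the core, so it will not warn you about heavy wings.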

The Bottom Line: Why PSF Matters

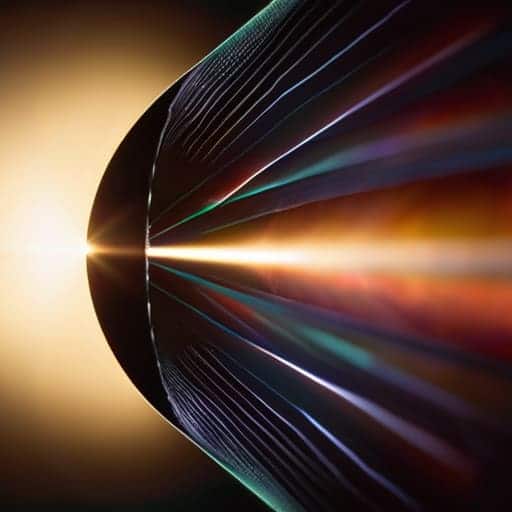

Think of the PSF not as a math equation, but as the “fingerprint” of your lens—it’s the physical limit that dictates whether a star looks like a sharp needle or a glowing smudge.

Understanding convolution is the secret to seeing how light actually travels; it’s the bridge between a perfect theoretical image and the messy, blurred reality captured by your sensor.

Resolution isn’t just a spec on a box; it’s a direct consequence of how well your optical system manages the point-spread function to keep detail from bleeding into a blur.

## The Essence of the Blur

“At its heart, the Point-spread Function is the universe’s way of telling us that perfection is an illusion; every lens, every eye, and every sensor is constantly fighting a losing battle against the inevitable smear of light.”

The Big Picture: Beyond the Blur

At its core, understanding the Point-spread Function isn’t just about mastering complex math or staring at diffraction patterns; it’s about recognizing the fundamental limits of how we capture the world. We’ve looked at how convolution shapes our images, how light distributes itself across a sensor, and why the resolution of your optical system is the ultimate gatekeeper of clarity. When you grasp the PSF, you stop seeing a “blurry photo” as a mere mistake and start seeing it as the inevitable physical signature of light interacting with hardware. It is the bridge between the perfect, theoretical point of light and the imperfect, beautiful reality of the images we actually hold in our hands.

As you move forward—whether you are designing the next generation of high-end lenses or simply fine-tuning a digital sensor—remember that perfection is a moving target. We will never truly eliminate the blur, but by mastering the PSF, we gain the power to predict, control, and compensate for it. Embracing these optical constraints doesn’t limit your creativity; it gives you the technical toolkit to push past them. Stop fighting the physics and start leveraging the science to see the world with more precision than ever before.

Frequently Asked Questions

If I know the PSF of my lens, can I actually "undo" the blur in post-processing?

The short answer? Yes, theoretically—but it’s rarely a magic “fix” button. This process is called deconvolution. If you have a mathematically accurate map of your PSF, you can use algorithms to reverse-engineer the light distribution and sharpen the image. However, there’s a catch: noise. As you try to “undo” the blur, you often end up amplifying sensor noise right along with the detail, leading to weird artifacts. It’s a delicate balancing act.
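As one concrete example of such an algorithm, here is a bare-bones 1D sketch of Richardson-Lucy deconvolution, a widely used iterative method. It is not the only option and not necessarily what your software uses. The sketch assumes a known, noise-free Gaussian PSF and non-negative data, and the helper names are invented for this illustration. Libraries such as scikit-image ship tested implementations for real images.

```python
import math

def gaussian_psf(sigma, radius):
    """Normalized 1D Gaussian kernel, standing in for a measured PSF."""
    taps = [math.exp(-0.5 * (i / sigma) ** 2) for i in range(-radius, radius + 1)]
    total = sum(taps)
    return [t / total for t in taps]

def convolve_same(signal, kernel):
    """Zero-padded convolution that returns len(signal) samples."""
    radius = len(kernel) // 2
    out = []
    for n in range(len(signal)):
        acc = 0.0
        for k, weight in enumerate(kernel):
            idx = n - (k - radius)
            if 0 <= idx < len(signal):
                acc += signal[idx] * weight
        out.append(acc)
    return out

def richardson_lucy(observed, psf, iterations):
    """Iteratively refine an estimate so that, once blurred, it matches `observed`."""
    mirrored = psf[::-1]
    # Start from a flat, positive guess with the same mean as the data.
    estimate = [sum(observed) / len(observed)] * len(observed)
    for _ in range(iterations):
        reblurred = convolve_same(estimate, psf)
        # Guard against division by zero where the re-blurred estimate vanishes.
        ratio = [o / max(r, 1e-12) for o, r in zip(observed, reblurred)]
        correction = convolve_same(ratio, mirrored)
        estimate = [e * c for e, c in zip(estimate, correction)]
    return estimate

def squared_error(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

# Ground truth: two point sources of different brightness.
truth = [0.0] * 41
truth[15] = 1.0
truth[25] = 0.6

psf = gaussian_psf(sigma=2.0, radius=6)
blurred = convolve_same(truth, psf)
restored = richardson_lucy(blurred, psf, iterations=50)

print("error before:", round(squared_error(blurred, truth), 3))
print("error after: ", round(squared_error(restored, truth), 3))
```

The iteration count is the knob that keeps this a scalpel. On noisy data, running more iterations keeps sharpening the noise along with the signal, so in practice you stop early or add regularization.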

How much does the specific type of sensor I'm using change the shape of the PSF?

It matters, but not in the way sensor marketing suggests. The lens (plus whatever medium the light passes through) sets the optical PSF; the sensor decides how finely that PSF gets sampled. What counts is pixel size relative to the width of the blur, not the overall size of the sensor. Small pixels capture a smoother, more continuous version of the PSF. Large pixels “pixelate” the blur, effectively sampling the PSF at wider intervals, and each pixel also averages the light falling across its own area, which adds a little blur of its own. Undersampling can lead to aliasing or a jagged reconstruction of a light distribution that your lens actually delivered smoothly.
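A quick sanity check for sampling is to count how many pixels fit across the PSF's full width at half maximum. The sketch below uses a Nyquist-style rule of thumb of at least two samples across the FWHM. That threshold is an assumption (many practitioners aim higher), and the function names and example numbers are hypothetical.

```python
def samples_across_fwhm(psf_fwhm_um, pixel_pitch_um):
    """How many pixels span the PSF's full width at half maximum."""
    return psf_fwhm_um / pixel_pitch_um

def is_undersampled(psf_fwhm_um, pixel_pitch_um, min_samples=2.0):
    """True if the pixels are too coarse for the assumed rule of thumb."""
    return samples_across_fwhm(psf_fwhm_um, pixel_pitch_um) < min_samples

# Illustrative numbers: a PSF that is 5 microns wide at the sensor plane.
psf_fwhm_um = 5.0

for pitch_um in (1.5, 6.5):
    status = "undersampled" if is_undersampled(psf_fwhm_um, pitch_um) else "adequately sampled"
    print(f"{pitch_um} um pixels: {status}")
```

Note that the sensor's overall dimensions never enter the calculation; only the pixel pitch does.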

Can a PSF be used to detect tiny imperfections or defects in a high-end optical system?

Absolutely. In fact, that’s one of the PSF’s most powerful “superpowers.” Think of the PSF as a high-precision diagnostic tool. If your optical system is perfect, the PSF should look exactly like the mathematical model predicts (for a clean circular aperture, that is the familiar Airy pattern: a bright core surrounded by faint rings). But if there’s a tiny scratch on a lens or a slight misalignment in a mirror, the PSF will warp, smear, or develop weird asymmetries. By analyzing those deviations, you can catch defects that are literally invisible to the naked eye.
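As a toy version of that diagnostic idea, the sketch below scores how lopsided a measured 1D PSF profile is about its peak. The `asymmetry` metric is a made-up illustration, not a standard optical test, and the one-sided tail is a crude stand-in for a coma-like defect. Real alignment work typically relies on richer tools such as wavefront analysis.

```python
import math

def asymmetry(profile):
    """Mirror mismatch about the peak, normalized by total signal.

    Returns 0.0 for a profile that is perfectly symmetric about its peak.
    """
    peak_index = profile.index(max(profile))
    reach = min(peak_index, len(profile) - 1 - peak_index)
    mismatch = sum(
        abs(profile[peak_index + i] - profile[peak_index - i])
        for i in range(1, reach + 1)
    )
    total = sum(profile[peak_index - reach : peak_index + reach + 1])
    return mismatch / total

center = 30

# A well-behaved, symmetric PSF profile.
clean = [math.exp(-0.5 * ((x - center) / 3.0) ** 2) for x in range(61)]

# The same profile with a faint tail on one side only.
flared = [
    value + (0.05 * math.exp(-(x - center) / 4.0) if x > center else 0.0)
    for x, value in enumerate(clean)
]

print("clean: ", asymmetry(clean))
print("flared:", round(asymmetry(flared), 4))
```

The tail here adds only a few percent of extra light, which would be hard to spot by eye in an image but shows up clearly in the score.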